With these rules in place when you copy a website it will scan all HTML files but only download to the save folder those matching the specified extensions. Both windows 10 desktop-software programs (HtTrack and Cyotek WebCopy) claim they can automatically render HTML-files of all webpages behind a login-page. Alternatively, you could just have a single rule which matched multiple extensions, for example \.(?:png|gif|jpg). Once a match is made there is no need to continue checking rules, so the Stop Processing option is also set. and then uses the Include option to override the previous rule and cause the file to be downloaded. * to match all URLs, and the rule options Exclude and Crawl Content.Įach subsequent rule adds a regular expression to match a specific image extension, for example \.png.

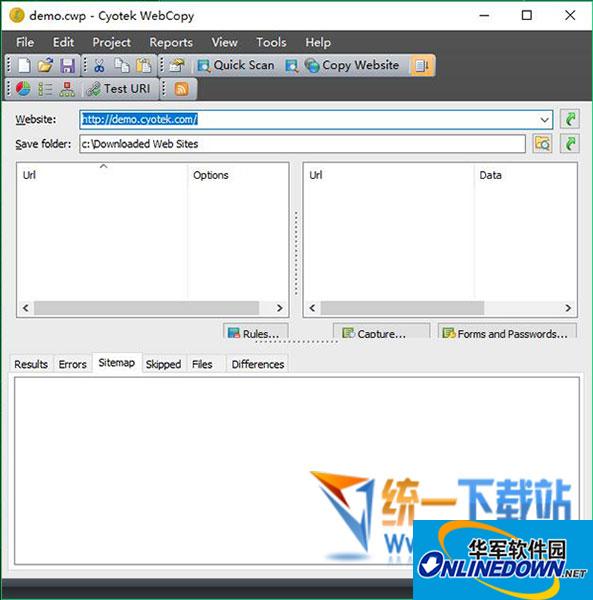

The first rule instructs WebCopy to not download any files at all to the save folder, but to still crawl HTML files. To do an image-only copy of a website, we need to configure a number of rules. What does Google do that those web copy tools don't The idea of this exercise is to both preserve a copy and discover things not yet linked. The subj tools Cyotek WebCopy and HTTrack cannot find those files, yet Google can: site: etc. This example follows from this and describes how you can use rules to crawl an entire website - but only save images. There are 'public' images and html files there that are not (ultimately) linked from index.html. In our previous tutorial we described how to define rules. Working with JavaScript enabled websites Older versions Advertisement Cyotek WebCopy is a very useful app that lets you download web pages to view them in full later, error free, and without an Internet connection.Using query string parameters in local filenames.Aborting the crawl using HTTP status codes.
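The way the rules described above combine can be sketched in a few lines of Python. This is only an illustration of the ordering logic, not WebCopy's actual implementation: the `Rule` class, the `decide` function, the default of "save and crawl everything" and the example URLs are all assumptions made for the sketch.

```python
import re
from dataclasses import dataclass


@dataclass
class Rule:
    """Hypothetical stand-in for a WebCopy rule and its options."""
    pattern: str                   # regular expression tested against the URL
    exclude: bool = False          # do not save matching files
    include: bool = False          # override an earlier Exclude
    crawl_content: bool = False    # still scan excluded HTML for links
    stop_processing: bool = False  # skip the remaining rules after a match


# The rule set from the text: exclude everything, then include images.
RULES = [
    Rule(r".*", exclude=True, crawl_content=True),
    Rule(r"\.png", include=True, stop_processing=True),
    Rule(r"\.gif", include=True, stop_processing=True),
    Rule(r"\.jpg", include=True, stop_processing=True),
]

# The single-rule alternative mentioned in the text.
COMBINED_RULES = [
    Rule(r".*", exclude=True, crawl_content=True),
    Rule(r"\.(?:png|gif|jpg)", include=True, stop_processing=True),
]


def decide(url, rules=RULES):
    """Apply the rules in order and return (save, crawl_content)."""
    save, crawl = True, True  # assumed default when no rule matches
    for rule in rules:
        if not re.search(rule.pattern, url):
            continue
        if rule.exclude:
            save = False
            crawl = rule.crawl_content
        if rule.include:
            save = True
        if rule.stop_processing:
            break  # a match was made, no need to check further rules
    return save, crawl


if __name__ == "__main__":
    for url in ("https://example.com/index.html",
                "https://example.com/img/logo.png",
                "https://example.com/css/site.css"):
        print(url, decide(url))
```

Under these assumptions, an HTML page is crawled but not saved, an image is saved, and any other file type is skipped. Swapping in `COMBINED_RULES` gives the same decisions with fewer rules.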

